Stephen Hawking cautioned that AI could result in the demise of humanity in the years leading up to his death

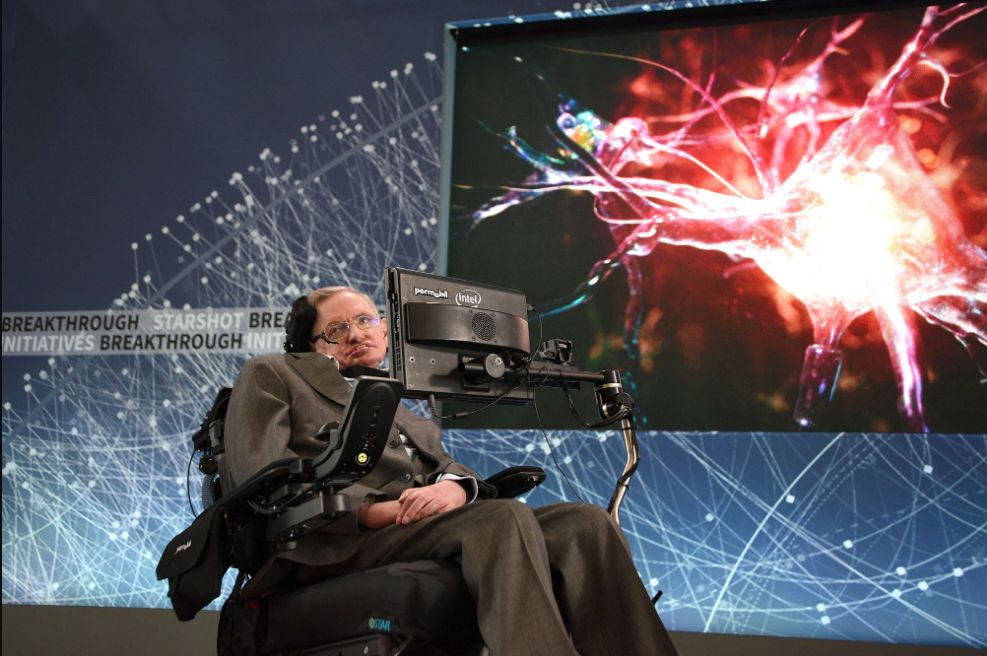

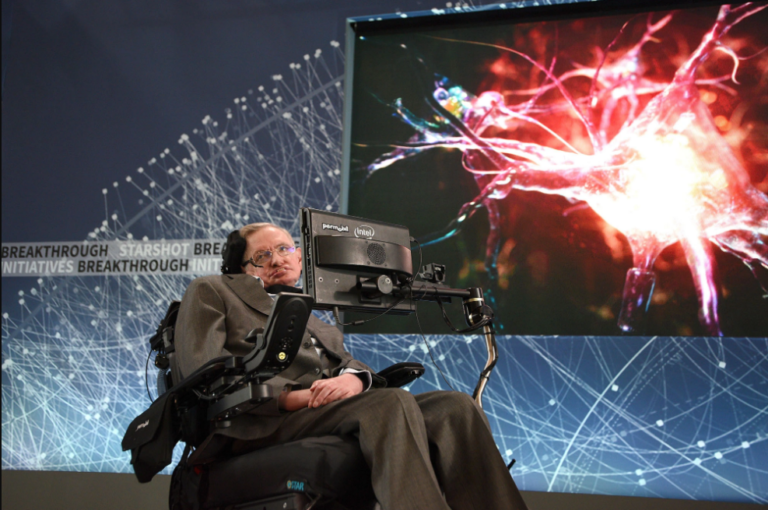

Before Elon Musk and Steve Wozniak signed a letter cautioning against the “profound risks” of artificial intelligence, British theoretical physicist Stephen Hawking had been expressing his concerns about the technology’s rapid evolution. In a 2014 interview with the BBC, Hawking stated that the development of full artificial intelligence had the potential to bring about the end of humanity. Despite his critical remarks on AI, Hawking himself relied on a basic form of the technology to communicate because of his debilitating illness, amyotrophic lateral sclerosis (ALS). Hawking lost the ability to speak in 1985 and came to rely on a speech-generating device run by Intel, which let him use facial movements to select words or letters that were then synthesized into speech.

When asked about the potential for revamping this voice technology, Hawking commented that while very basic forms of AI had already demonstrated power, creating systems that were equal to or greater than human intelligence could be catastrophic for the human race. He believed that such systems could quickly redesign themselves at an ever-increasing rate, leading to unpredictable and potentially disastrous outcomes.

Hawking further added that humans, who are subject to slow biological evolution, would be left behind and replaced by artificial intelligence. After his death, Hawking’s final book titled “Brief Answers to the Big Questions” was published. The book addressed a range of topics, including Hawking’s position on the non-existence of God, his prediction that humans would one day inhabit space, and his concerns about genetic engineering and global warming. The book also highlighted Hawking’s belief that artificial intelligence was a major concern, as he predicted that computers would likely surpass human intelligence within the next century.

In his writing, Hawking warned that there could be a point where machines surpass human intelligence in a significant way, leading to an “intelligence explosion” that could leave humans behind. He stressed the importance of training AI to align with human goals, cautioning against underestimating the potential risks. Hawking’s concerns were shared by other tech leaders like Elon Musk and Steve Wozniak, who signed a letter earlier this year calling for a pause on building more powerful AI systems. Dismissing the idea of highly intelligent machines as mere science fiction could be a grave mistake, according to Hawking.

The letter, which was published by the nonprofit Future of Life Institute, states that AI systems with human-competitive intelligence can pose significant risks to society and humanity, as shown by extensive research and acknowledged by leading AI laboratories. In January, OpenAI’s ChatGPT became the chatbot with the fastest-growing user base, reaching 100 million monthly active users as people worldwide sought to engage with the platform, which generates human-like conversations in response to prompts. In March, the laboratory launched GPT-4, the latest version of the platform. Despite calls for a pause in research at AI labs striving to create technology that surpasses GPT-4, the release of the system was a watershed moment that had a ripple effect throughout the tech industry, spurring various companies to compete in developing their own AI systems.

Google is working on revamping its search engine and developing a new one based on AI, while Microsoft has released a new version of its Bing search engine that is powered by AI and has been described as a user’s “copilot” on the web. Elon Musk has also announced plans to launch his own AI system, which he has dubbed a “maximum truth-seeking” rival to the others.

In the year leading up to his death, Stephen Hawking warned of the potential risks associated with AI, stating that the technology could be the worst event in human history unless society prepares for and manages those risks. However, he also acknowledged that the future impact of AI remains uncertain and that it could benefit humanity if developed in the right way. In a speech at the Web Summit technology conference in 2017, Hawking emphasized that the development of AI could turn out to be either the best or the worst event in human history.
